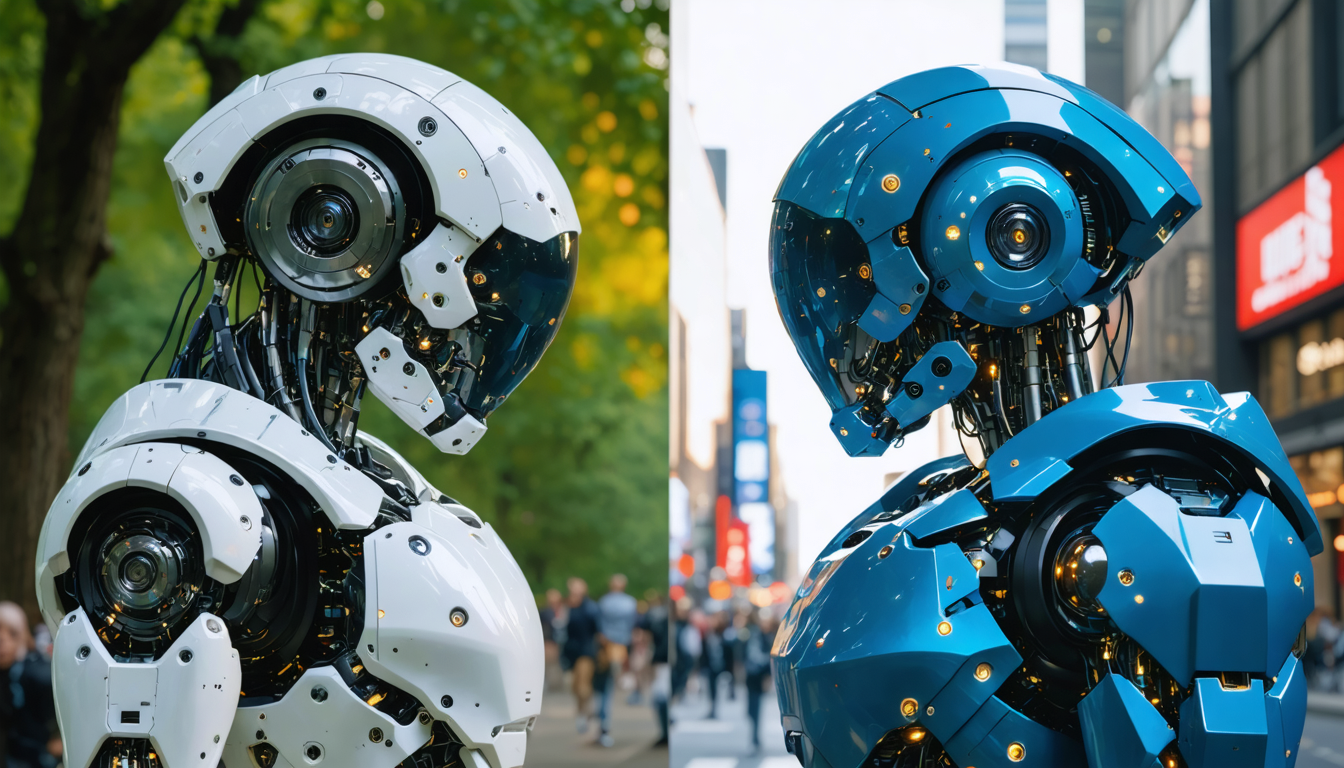

The new alliance between OpenAI and the United States Department of War has opened a major debate at the beginning of 2026: should popular artificial intelligence technologies be allowed to become military tools? While Anthropic, a major competitor in the AI field, refuses to take part in the militarization of its models without sufficient guarantees, OpenAI has agreed to a controversial partnership. This strategic divergence has caused an upheaval in the user community, giving rise to the “Cancel ChatGPT” movement, which brings together those who denounce an ethical abandonment. More than a simple technological dispute, this turning point exposes a deep fracture over values and responsibilities. As Claude, Anthropic’s assistant, climbs to the top of the application rankings, the question of trust in AI giants becomes crucial, with implications that go beyond the market alone and affect brand image and the future of regulation.

The militarization of mass-market AI technologies is becoming a decisive issue in users’ choices in 2026. Beyond performance or features, questions of ethics, transparency, and purpose are becoming essential. The contrast between OpenAI and Anthropic also highlights broader issues, notably the control of autonomous weapons, mass surveillance, and the role of AI companies vis-à-vis government administrations. While some praise OpenAI’s insistence on safeguards, others consider that merely consenting to military use constitutes a rupture and a clear source of disenchantment with ChatGPT. The case illustrates the latent conflict between technological innovation, commercial imperatives, and moral demands in the digital age.

- 1 Consequences of the OpenAI – Department of War agreement: “Cancel ChatGPT” movement and impact on users

- 2 Anthropic: ethics and refusal of the militarization of artificial intelligence

- 3 OpenAI’s safeguards: consent and limits to militarization, the official argument

- 4 Ethics and technology: when the general public takes up the issues of AI militarization

- 5 Reactions and stances in the artificial intelligence sector

- 6 The future: an era where ethics sustainably condition strategic partnerships in AI

- 7 Paths for an ethical and responsible integration of AI in strategic sectors

- 8 Frequently Asked Questions about the OpenAI, Anthropic, and AI militarization controversy

- 8.1 Why did Anthropic refuse the militarization of its AI?

- 8.2 What guarantees does OpenAI claim to have obtained in the military partnership?

- 8.3 What is the impact of the OpenAI – Department of War partnership on the general public?

- 8.4 Has Anthropic’s Claude benefited from the refusal of militarization?

- 8.5 What are the future challenges for the militarization of artificial intelligence?

Consequences of the OpenAI – Department of War agreement: “Cancel ChatGPT” movement and impact on users

The official announcement of the partnership between OpenAI and the US Department of War triggered an unprecedented shockwave in the artificial intelligence user community. The hashtag “Cancel ChatGPT” quickly emerged on social media, expressing a massive and rapid protest. The resulting wave of uninstalls and ChatGPT Plus subscription cancellations shows that acceptance or rejection of a service is no longer limited to its technical efficiency but is now rooted in a moral judgment about the company’s values.

Committed internet users, mainly on X (formerly Twitter) and Reddit, organized around their convictions by sharing detailed guides to export their data, delete their ChatGPT account, and migrate to alternatives judged more ethical, such as Anthropic’s assistant Claude. For example, one user published a complete series of video tutorials on Reddit to facilitate this transition, while several specialized blogs compared OpenAI’s policy in detail with that of Anthropic, highlighting the latter’s stance against militarization.

This is not just a passing trend. It marks the first time that the militarization of mass-market artificial intelligence has become a fundamental criterion in user loyalty. Some internet communities have mobilized not only to protest but also to build a critical discourse on the impact of AI on global security, surveillance, and the risks linked to autonomous weapons.

The protest has also taken on an economic dimension: on the App Store and Play Store, Claude, Anthropic’s AI, has risen to the top of the download rankings, directly benefiting from the moral rejection of ChatGPT. This rise has sometimes been accompanied by technical incidents, such as server overloads caused by the sudden influx of new users. Ethics is thus becoming a key factor in the positioning and competitiveness of artificial intelligence solutions.

Here is a summary of the main consequences of the “Cancel ChatGPT” movement:

- Multiplication of cancellations of paid subscriptions to ChatGPT Plus.

- Creation and dissemination of educational resources to effectively leave the OpenAI platform.

- Spectacular rise of the Claude AI in application store rankings.

- Strengthening of public debate on the responsibility of AI companies regarding military uses.

- Reinforcement of polarization among users depending on their support or rejection of militarization.

Example of a guide to cancel ChatGPT Plus and migrate to Claude

The guide published by a group of users highlights that the cancellation process is simple but requires following several key steps: exporting personal data, deleting conversation history, uninstalling applications on various devices, then subscribing to Claude through official stores.

This document is not limited to a technical explanation but also highlights the nature of the ethical choice, “no longer supporting a player who agrees to provide an artificial intelligence potentially deployed on classified military networks.”

Anthropic: ethics and refusal of the militarization of artificial intelligence

Anthropic, OpenAI’s competing company and creator of Claude, has taken a firmly opposed stance towards the militarization of its artificial intelligence technologies. Rather than signing an agreement with the Pentagon, Anthropic chose to abstain, citing a lack of sufficient guarantees to prevent mass surveillance and uncontrolled autonomous weapons.

In a context where government funding can reach hundreds of millions of dollars, this refusal is a risky long-term bet. However, the decision is part of a strategy aimed at protecting the company’s reputation, security, and moral values.

Anthropic emphasizes the principle of safety as the cornerstone of its approach. The company does not completely close the door to military collaboration but makes clear, controlled, and transparent safeguards a precondition for any engagement. This nuance is fundamental: it strikes a balance between categorical refusal and openness to dialogue on ethical rules.

According to an internal note made public, management specifies that:

“We consider that the militarization of AI without a strict and verifiable framework presents risks that are too high for society. No power should have fully autonomous weapons without robust control mechanisms.”

This positioning confers on Claude the image of an ethical tool, guaranteeing responsible and cautious artificial intelligence in its design and deployment.

The effect is felt among users. It is visible on the application stores, where Claude now tops the App Store and Play Store rankings, a concrete illustration of the impact an ethical strategy can have on consumer behavior.

Anthropic’s approach encompasses several dimensions:

- Emphasis on safety and security of deployments.

- Demand for transparency and control over military actors’ intended use.

- Acceptance of conditional collaboration, rather than a definitive break.

- Moral positioning as a competitive advantage in a closely watched sector.

The cost of refusal: strategic and financial challenges

Refusing such a lucrative partnership with the Department of War also means forgoing a significant amount of potentially useful funding, in a sector where investment remains a priority to stay competitive. Yet this choice illustrates a position in which moral principles are prioritized over short-term gains.

In 2026, this divergence marks a turning point in the sector’s dynamics. It triggers a redefinition of evaluation criteria for AI companies, not only based on their technical performance but also on their operational ethics.

OpenAI’s safeguards: consent and limits to militarization, the official argument

OpenAI fully stands by its agreement with the US Department of War. The company claims to have negotiated a framework with more safeguards than what was offered to Anthropic. It specifies that this agreement excludes applications related to mass surveillance and fully autonomous weapons.

The official text insists that military uses must be limited to operations deemed “lawful,” without ambiguity regarding respect for human rights. OpenAI also promises to closely monitor compliance with these limits.

However, the public reaction remains mixed. The meaning of the expression “for lawful purposes” in a contract depends on a legal and political framework that can change, which fuels significant mistrust among users. They fear that the law could evolve and allow questionable uses tomorrow.

Moreover, the shift made by OpenAI, which until now had joined Anthropic in supporting a strict approach to security, has shocked part of its community. This reversal fuels a growing feeling of ethical inconsistency in a sector already perceived as opaque and complex.

Here are the major points of OpenAI’s position regarding its consent to militarization:

- Assurance of a strict framework excluding mass surveillance.

- Commitment not to develop fully autonomous weapons.

- Regular control and audits of uses within the Department of War.

- Call for pragmatic collaboration to combine innovation and security.

- Risk of variable interpretation of what is “lawful” in the long term.

Why user trust is wavering

Criticisms addressed to OpenAI are not limited to this single matter but are part of a broader context of mistrust: opacity around training data, significant energy consumption, and complex social and professional implications. The military dimension adds further weight, weakening trust in the company’s transparency.

Ethics and technology: when the general public takes up the issues of AI militarization

This affair has marked an unprecedented shift in how the general public perceives the stakes of artificial intelligence. In the past, controversies often concerned abstract technical dimensions: algorithmic biases, data privacy, or environmental impact. Today, the issue of militarization directly involves civil society, with a strong emotional and political impact.

This change results from increased awareness of the potential consequences of unregulated use, especially in a sensitive sector like defense. Users no longer want only performance or innovation; they seek alignment with their values. They understand that AIs are not mere neutral tools but carry fundamental ethical choices within them.

This evolution forces AI developers to deeply reconsider their commercial strategy. Ethics becomes a central element to retain a user base that no longer hesitates to sanction decisions contrary to its principles.

There is also a growing political issue around the regulation of military AI. Public opinion now contributes to influencing legislative and international debates, encouraging transparency and stricter rules to avoid a frantic race for military-use technologies.

Key questions raised by AI militarization:

- The moral role of companies in designing AI technologies.

- Risks linked to loss of control over autonomous weapons.

- Extended and potentially intrusive surveillance enabled by AI.

- Informed consent and information for end users.

- The need for harmonized international regulation.

Reactions and stances in the artificial intelligence sector

Beyond OpenAI and Anthropic, the entire technology sector is under fire over AI militarization. Several well-known players have had to clarify their position and publicly outline their terms of engagement.

Some players, like Anthropic, attract attention for their prudence and refusal of military contracts, favoring ethical and responsible development. Conversely, other large companies choose to collaborate with governments, arguing that safe frameworks must be devised for technologies used in areas of high strategic value.

This polarization is also reflected in financial investment. The following comparative table of military partnerships in the sector illustrates the strategic choices of AI companies:

| Company | Military Partnership | Ethical Position | Amount (USD millions) |
|---|---|---|---|
| OpenAI | Yes, with US Department of War | Consent with safeguards | 200 |
| Anthropic | No, prudential refusal | Rejection without agreement | 0 |
| Google DeepMind | Limited collaboration, surveillance | Mixed position, increased control | 150 |
| Microsoft | Integration of AI into US armed forces | Strict consent | 180 |

Technology investors, such as Aidan Gold, have also spoken publicly about the moral dilemma OpenAI faced before signing the agreement. They notably point out the irony of OpenAI’s short-lived public support for Anthropic’s position on security before its sudden change of course.

The future: an era where ethics sustainably condition strategic partnerships in AI

The OpenAI-Anthropic case highlights the rise of a new era where militarization of AI becomes a true moral test. Companies can no longer rely solely on technological promise: they are now judged also on their ability to align their strategic decisions with ethics recognized by their users.

Recent examples show that this demand is not a barrier but an opportunity. For Anthropic, refusing a major government contract reflects strength of character and a clear willingness to position itself internationally as a responsible player.

For OpenAI, the challenge is more complex. Time will tell if consent to this military partnership, with its restrictive clauses, will manage to convince the community and sustain trust. In any case, the battle for ethics is now at the very heart of corporate strategies.

This turning point also opens the way for enriched dialogue between public authorities, private actors, and civil society. Pressure to implement international regulations on AI and its military uses intensifies alongside popular reactions. The market can no longer ignore this ethical dimension without risking a lasting fracture of its user base.

Artificial intelligence, far from being a simple tool, must now be treated as a technology whose every technical evolution carries major societal responsibilities and requires informed, collective consent.

Paths for an ethical and responsible integration of AI in strategic sectors

To guarantee a balance between technological innovation and moral demands, several paths can be considered:

- Establish strict international standards regulating the use of AI in the military domain, notably regarding autonomous weapons and surveillance.

- Strengthen transparency of contracts and usage purposes between companies and administrations.

- Include end users in ethical debates, through consultation and active information.

- Develop third-party audit systems allowing verification of compliance with commitments.

- Favor partnerships between researchers, regulators, and industries to align development and control.

- Adopt clear communication to reject ambiguities and preserve trust.

Here is a synthetic table of risks, challenges, and possible solutions related to the militarization of AI:

| Risks | Challenges | Possible solutions |
|---|---|---|
| Uncontrolled autonomous weapons | Global security, international stability | Implementation of treaties, clear bans |
| Intrusive mass surveillance | Respect for civil rights, privacy | Rigorous legislation, democratic control |
| Loss of user trust | Social and commercial acceptability | Transparency, dialogue, education |
| Use for illegal purposes | Ethics and legality | Independent audits, sanctions |

Frequently Asked Questions about the OpenAI, Anthropic, and AI militarization controversy

Why did Anthropic refuse the militarization of its AI?

Anthropic opposed the militarization of its AI by the Pentagon due to insufficient guarantees on preventing mass surveillance and controlling autonomous weapons. The company favors engagement only under strict safeguards to ensure safety and security.

What guarantees does OpenAI claim to have obtained in the military partnership?

OpenAI claims that its agreement excludes uses related to mass surveillance and fully autonomous weapons, and provides for strict control as well as a legal framework limiting military uses to lawful purposes.

What is the impact of the OpenAI – Department of War partnership on the general public?

The partnership triggered a protest movement called “Cancel ChatGPT,” in which users left the platform for alternatives considered more ethical, placing the moral issue of AI and its military use at the center of the debate.

Has Anthropic’s Claude benefited from the refusal of militarization?

Yes, Claude rose sharply in application rankings, especially on the App Store, thanks to its image as a more ethical AI, illustrating the importance of the ethical factor in user choices.

What are the future challenges for the militarization of artificial intelligence?

Future challenges concern establishing international standards, transparency of partnerships, user participation in ethical debates, and development of audit systems to prevent abuses and maintain trust.